Telemetry data is a powerful tool for understanding the behavior of complex systems. OpenTelemetry provides a platform-agnostic, open-source way to collect, process, and export telemetry data.

This post explores the OpenTelemetry architecture, focusing on the Collector. We’ll look at how the Collector works and how it can process telemetry data from a wide range of systems and applications.

We’ll also discuss some benefits of using OpenTelemetry for your telemetry needs.

OpenTelemetry Architecture Overview

OpenTelemetry is a CNCF project that provides a single set of APIs, libraries, agents, and collectors to capture telemetry from your applications and send it to any monitoring backend.

Source: Medium.com

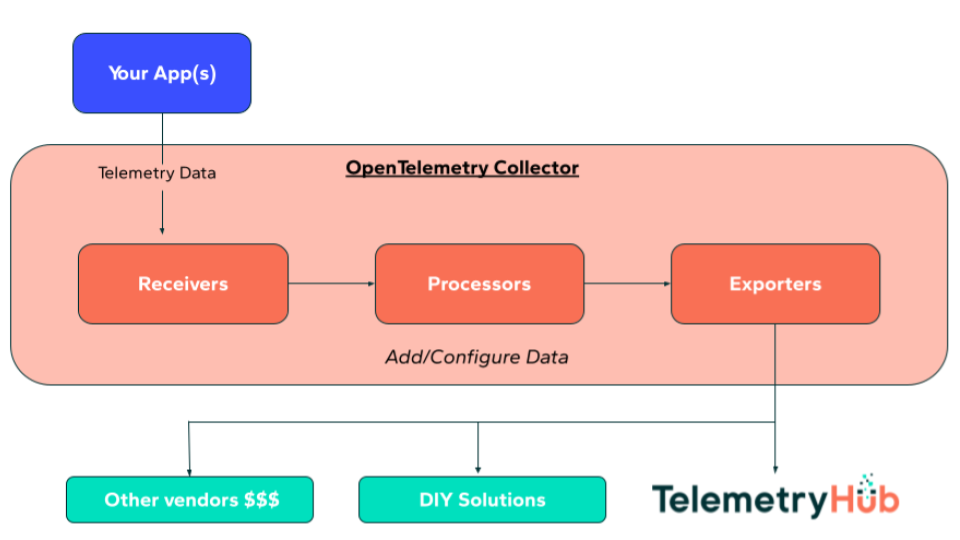

At a high level, the OpenTelemetry architecture can be divided into three parts:

- The SDK: Used by instrumented applications to generate telemetry data. The SDK is available in a variety of programming languages.

- The collectors: Responsible for receiving, processing, and exporting telemetry data from instrumented applications.

- The exporters: Send processed telemetry data to various monitoring backends (e.g., TelemetryHub, Jaeger). Exporters are available both in the language SDKs and in the Collector.

Proto

OpenTelemetry defines its own wire protocol, OTLP (the OpenTelemetry Protocol). OTLP encodes telemetry with Protocol Buffers (Protobuf) and carries it over either gRPC or HTTP between SDKs, collectors, and backends.

Protobuf is a compact, language-neutral binary format, which makes it a good fit for interoperability between programming languages.

gRPC is a high-performance RPC framework built on HTTP/2 that supports features such as bidirectional streaming and low-latency calls.
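To see why a binary encoding is compact, here is a minimal sketch of the base-128 "varint" scheme that Protobuf uses for integer fields. It is plain Python written for illustration, not the Protobuf library; `encode_varint` and `decode_varint` are hypothetical helper names.

```python
def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protobuf-style base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        byte = value & 0x7F          # take the low 7 bits
        value >>= 7
        if value:
            out.append(byte | 0x80)  # continuation bit set: more bytes follow
        else:
            out.append(byte)         # last byte: continuation bit clear
            return bytes(out)


def decode_varint(data: bytes) -> int:
    """Decode a single varint from the start of `data`."""
    result = 0
    for index, byte in enumerate(data):
        result |= (byte & 0x7F) << (7 * index)  # low 7-bit groups come first
        if not byte & 0x80:
            return result
    raise ValueError("truncated varint")


# 300 needs two bytes here, versus three ASCII characters for "300" in a text format.
print(encode_varint(300).hex())  # ac02
```

Small values such as field tags and lengths usually fit in a single byte, which is a large part of why Protobuf payloads are smaller than equivalent JSON.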

Specification

The OpenTelemetry specification defines the interfaces and data model the SDK and collectors must implement. The specification is designed to be extensible to accommodate a wide range of telemetry data types.

For tracing, OpenTelemetry’s data model is built around spans. A span represents a single operation within a trace, and spans are linked through parent-child relationships to represent causality between operations.
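The sketch below shows how parent-child links alone are enough to reconstruct a trace’s call hierarchy. It is a simplified, stdlib-only illustration: the `Span` class, `new_id`, and `print_tree` are hypothetical, and real OpenTelemetry spans also carry timestamps, attributes, status, and more. The ID sizes (16-byte trace IDs, 8-byte span IDs) follow the OpenTelemetry specification.

```python
import secrets
from dataclasses import dataclass, field
from typing import Optional


def new_id(num_bytes: int) -> str:
    """Random lowercase hex ID of the given size in bytes."""
    return secrets.token_hex(num_bytes)


@dataclass
class Span:
    name: str
    trace_id: str
    span_id: str = field(default_factory=lambda: new_id(8))
    parent_id: Optional[str] = None

    def child(self, name: str) -> "Span":
        # A child shares the trace ID and points at its parent's span ID.
        return Span(name=name, trace_id=self.trace_id, parent_id=self.span_id)


def print_tree(spans: list, parent_id: Optional[str] = None, depth: int = 0) -> None:
    """Rebuild and print the call hierarchy using only the parent links."""
    for span in spans:
        if span.parent_id == parent_id:
            print("  " * depth + span.name)
            print_tree(spans, span.span_id, depth + 1)


root = Span(name="GET /checkout", trace_id=new_id(16))
db = root.child("SELECT cart")
payment = root.child("POST /payments")
fraud = payment.child("fraud-check")

print_tree([root, db, payment, fraud])
# GET /checkout
#   SELECT cart
#   POST /payments
#     fraud-check
```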

In addition to spans, the OpenTelemetry data model includes other types of telemetry data, such as metrics and logs.

The specification defines how these various data types are represented in the Protobuf format and how they are exchanged over OTLP.

Collector

The Collector’s functionality is defined by the OpenTelemetry Collector project. The Collector is responsible for receiving, processing, and exporting telemetry data from instrumented applications.

Collectors are designed to be highly extensible and modular. They can run as an agent alongside an application or host, or as a standalone gateway service within a larger telemetry system. In addition, a collector can be configured to process data from multiple sources and export it to multiple destinations.

Instrumentation

Instrumentation is the process of adding code to an application so that it emits telemetry data. The OpenTelemetry SDK provides APIs and libraries that make it easy to instrument applications.
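Manual instrumentation usually means wrapping an operation so that its name, duration, and outcome are recorded. The following stdlib-only sketch illustrates that idea with a decorator; `traced` and `RECORDED` are hypothetical names, and a real application would create spans through the OpenTelemetry SDK’s tracer instead of appending dictionaries to a list.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory sink; a real setup would hand records to an SDK exporter.
RECORDED: list = []


def traced(name: str) -> Callable:
    """Decorator that records the duration and status of each call."""
    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            status = "OK"
            try:
                return func(*args, **kwargs)
            except Exception:
                status = "ERROR"
                raise  # instrumentation must not swallow the application's errors
            finally:
                RECORDED.append({
                    "name": name,
                    "duration_ms": (time.perf_counter() - start) * 1000,
                    "status": status,
                })
        return wrapper
    return decorator


@traced("compute-total")
def compute_total(prices: list) -> float:
    return sum(prices)


compute_total([9.99, 5.01])
print(RECORDED[-1]["name"], RECORDED[-1]["status"])  # compute-total OK
```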

In addition to the SDK, there are many third-party libraries that provide instrumentation for specific programming languages and frameworks.

OpenTelemetry is designed to interoperate with a wide range of instrumentation libraries, so you can choose the best option for your application.

Collector Architecture

Receiver

The first component of a collector is the receiver. The receiver accepts telemetry data from instrumented applications (or pulls it from other sources, such as Prometheus scrape targets) and passes it to the processors.

The receiver matters because it is the point at which telemetry data enters the system, so it must handle a high volume of data with low latency. To achieve this, the receiver must scale with load and tolerate faults, for example by pushing back on senders when it cannot keep up instead of silently dropping data.
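One common way to protect the rest of a pipeline under load is to buffer incoming batches in a bounded queue and refuse new data when the queue is full, so that the sender can retry later. The sketch below illustrates only that idea; `BoundedReceiver` is a hypothetical class, not the Collector’s receiver interface (the Collector itself is written in Go).

```python
import queue
from typing import Any


class BoundedReceiver:
    """Accepts telemetry batches and signals back-pressure when full."""

    def __init__(self, capacity: int) -> None:
        self._queue: "queue.Queue[list[Any]]" = queue.Queue(maxsize=capacity)
        self.rejected = 0

    def receive(self, batch: list) -> bool:
        """Return True if accepted, False if the caller should retry later."""
        try:
            self._queue.put_nowait(batch)
            return True
        except queue.Full:
            self.rejected += 1
            return False

    def drain(self) -> list:
        """Hand every buffered batch to the next stage (e.g. a processor)."""
        batches = []
        while True:
            try:
                batches.append(self._queue.get_nowait())
            except queue.Empty:
                return batches


receiver = BoundedReceiver(capacity=2)
results = [receiver.receive([f"span-{i}"]) for i in range(3)]
print(results)  # [True, True, False]
```

Rejecting explicitly keeps memory bounded and lets the sender decide how to retry, rather than letting an overloaded receiver grow without limit.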

Processor

The processor is the heart of the collector. Processors sit between the receivers and the exporters: they transform telemetry data, for example by aggregating, filtering, or batching it, and then pass it on to one or more exporters.

Processing runs concurrently, and a collector deployment can scale horizontally by adding more collector instances to meet the needs of high-volume data processing. Processors must be designed for high performance so that they do not become a bottleneck in the system.
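Filtering and batching are two of the most common processing steps, and the Collector offers processors for both (for example, the `batch` and `filter` processors). The sketch below only mimics their effect on plain dictionaries; the function names and the use of an `http.route` attribute as the filter key are illustrative assumptions.

```python
from typing import Any, Iterator

Span = dict  # a span is modeled as a plain dict in this sketch


def drop_health_checks(spans: list) -> list:
    """Filter: discard spans whose (assumed) http.route attribute is /healthz."""
    return [s for s in spans if s.get("attributes", {}).get("http.route") != "/healthz"]


def batch(spans: list, max_size: int) -> Iterator[list]:
    """Batch: group spans so each export call carries at most max_size items."""
    for start in range(0, len(spans), max_size):
        yield spans[start:start + max_size]


incoming: list = [
    {"name": "GET /healthz", "attributes": {"http.route": "/healthz"}},
    {"name": "GET /cart", "attributes": {"http.route": "/cart"}},
    {"name": "POST /order", "attributes": {"http.route": "/order"}},
    {"name": "GET /cart", "attributes": {"http.route": "/cart"}},
]

batches = list(batch(drop_health_checks(incoming), max_size=2))
print([len(b) for b in batches])  # [2, 1]
```

Filtering before batching means the exporter never spends a request on data that would be discarded anyway.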

Exporters

The exporter is responsible for sending processed telemetry data to a monitoring backend. OpenTelemetry supports various monitoring backends, including Prometheus, Jaeger, and TelemetryHub. Note that tools such as Prometheus, Zipkin, and Jaeger are more DIY solutions (i.e., you build dashboards manually), versus out-of-the-box solutions such as TelemetryHub (i.e., no manual dashboarding necessary).

Exporters play a significant role in the overall performance of a collector. They must be designed for high performance to handle a large volume of data with low latency.
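One way an exporter keeps data flowing when a backend is briefly unavailable is to retry failed sends with exponential backoff; the Collector’s exporters expose settings for this under `retry_on_failure`. The sketch below illustrates the technique only: `export_with_retry` and `FlakyBackend` are hypothetical, and the parameter names are not the Collector’s configuration keys. The `sleep` parameter is injectable so the backoff schedule can be observed without actually waiting.

```python
import time
from typing import Callable


def export_with_retry(
    send: Callable[[list], None],
    batch: list,
    max_attempts: int = 5,
    initial_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call send(batch), retrying with exponential backoff; return attempts used."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delay = initial_delay
    for attempt in range(1, max_attempts + 1):
        try:
            send(batch)
            return attempt
        except ConnectionError:
            if attempt == max_attempts:
                raise  # give up: the caller decides whether to drop or requeue
            sleep(delay)
            delay = min(delay * 2, max_delay)  # double the wait, up to a cap
    raise AssertionError("unreachable")


class FlakyBackend:
    """Hypothetical backend that fails a fixed number of times, then succeeds."""

    def __init__(self, failures: int) -> None:
        self.failures = failures
        self.received: list = []

    def send(self, batch: list) -> None:
        if self.failures > 0:
            self.failures -= 1
            raise ConnectionError("backend unavailable")
        self.received.append(batch)


waits: list = []
backend = FlakyBackend(failures=2)
attempts = export_with_retry(backend.send, ["span-1"], sleep=waits.append)
print(attempts, waits)  # 3 [0.1, 0.2]
```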

Advantages of Using the OpenTelemetry Collector

OpenTelemetry provides many benefits over traditional approaches to collecting and processing telemetry data.

Open-source

OpenTelemetry is open-source and vendor-neutral. You can use it with any monitoring backend without being locked into a specific vendor or platform.

This is because the OpenTelemetry Collector is designed to be highly extensible. It can be configured to work with any monitoring backend that accepts OTLP or for which a Collector exporter exists.

Additionally, the OpenTelemetry SDK is available in many programming languages, making it easy to instrument applications written in a variety of languages.

Support for multiple data formats

OpenTelemetry supports multiple telemetry signals (traces, metrics, and logs), and the Collector’s receivers accept many input formats, such as OTLP, Jaeger, Zipkin, and Prometheus. This means you can collect data from a wide variety of sources.

The OpenTelemetry Collector can be configured to process data from multiple sources and export it to multiple destinations. This makes it easy to aggregate data from multiple systems and send it to a central repository for analysis.
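Sending the same data to several destinations is a fan-out. The sketch below shows the idea under one assumption of ours: each destination is isolated, so a failing backend does not prevent delivery to the others. `fan_out` and the two destination functions are hypothetical and do not describe how the Collector implements fan-out internally.

```python
from typing import Callable

Exporter = Callable[[list], None]


def fan_out(batch: list, exporters: dict) -> dict:
    """Send the same batch to every destination; one failure does not block the rest."""
    status = {}
    for name, export in exporters.items():
        try:
            export(batch)
            status[name] = "ok"
        except Exception as exc:  # isolate each destination's failures
            status[name] = f"failed: {exc}"
    return status


central_store: list = []


def to_central_store(batch: list) -> None:
    central_store.extend(batch)


def to_offline_backend(batch: list) -> None:
    raise ConnectionError("unreachable")


result = fan_out(["log-1", "log-2"], {"central": to_central_store, "backup": to_offline_backend})
print(result)  # {'central': 'ok', 'backup': 'failed: unreachable'}
```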

Improved performance

OpenTelemetry collectors are built with efficiency as a primary design consideration. OTLP typically transmits data as Protobuf, a compact binary format, rather than as verbose text.

In addition, collectors process data concurrently and can be scaled out across multiple instances, making them a good fit for high-traffic applications.

Ease of use

OpenTelemetry is designed to be approachable. The SDK includes APIs and libraries that make it straightforward to instrument applications.

In addition, the OpenTelemetry Collector is highly customizable, so it is easy to adapt to your requirements.

Stronger security

OpenTelemetry supports TLS (including mutual TLS) on OTLP connections, which encrypts telemetry in transit. With TLS enabled, your telemetry data is protected from eavesdropping and tampering.

This matters most in distributed systems, where data often crosses networks that cannot be fully trusted.
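Encryption in transit is provided by TLS. The following sketch uses Python’s standard `ssl` module to show the client-side properties that make a TLS connection resistant to eavesdropping and tampering: certificate verification and hostname checking. It is an illustration, not OpenTelemetry configuration; in the Collector, the equivalent settings live in a component’s `tls` block (with options such as `ca_file`, `cert_file`, and `key_file`). The certificate paths in the comment are placeholders.

```python
import ssl

# Client-side context for an encrypted connection (illustrative).
context = ssl.create_default_context()  # loads the system's trusted CA certificates
context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions

# A default context already verifies the server certificate and its hostname;
# these checks are what stop eavesdropping and man-in-the-middle tampering.
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)  # True True

# For mutual TLS, the client would also present its own certificate, e.g.:
# context.load_cert_chain(certfile="/path/to/cert.pem", keyfile="/path/to/key.pem")
```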

How to Configure the Collector

The OpenTelemetry Collector can be configured to process data from multiple sources and export it to multiple destinations. The following sections show how to configure the Collector for several specific monitoring backends.

Configuring the Collector for TelemetryHub

To configure the Collector to export data to TelemetryHub, supply the following values in the Collector’s configuration file, replacing `$YOUR_INGEST_KEY` with your ingest key:

```yaml
receivers:
  otlp:
    protocols:
      http:
      grpc:

exporters:
  otlp:
    endpoint: https://otlp.telemetryhub.com:4317
    headers:
      x-telemetryhub-key: $YOUR_INGEST_KEY

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: []
      exporters: [otlp]
    metrics:
      receivers: [otlp]
      processors: []
      exporters: [otlp]
    logs:
      receivers: [otlp]
      processors: []
      exporters: [otlp]
```
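The Collector validates its configuration at startup and reports an error if a pipeline references a component that is not defined. The sketch below reproduces that kind of check on the configuration above, written as a Python dict so no YAML library is needed; `find_undefined_components` is a hypothetical helper, not part of the Collector.

```python
from typing import Any

config: dict = {
    "receivers": {"otlp": {"protocols": {"http": None, "grpc": None}}},
    "exporters": {
        "otlp": {
            "endpoint": "https://otlp.telemetryhub.com:4317",
            "headers": {"x-telemetryhub-key": "$YOUR_INGEST_KEY"},
        }
    },
    "service": {
        "pipelines": {
            signal: {"receivers": ["otlp"], "processors": [], "exporters": ["otlp"]}
            for signal in ("traces", "metrics", "logs")
        }
    },
}


def find_undefined_components(cfg: dict) -> list:
    """List every pipeline reference that has no matching top-level definition."""
    problems = []
    for pipeline, spec in cfg["service"]["pipelines"].items():
        for kind in ("receivers", "processors", "exporters"):
            defined: Any = cfg.get(kind) or {}
            for name in spec.get(kind, []):
                if name not in defined:
                    problems.append(f"{pipeline}: {kind[:-1]} '{name}' is not defined")
    return problems


print(find_undefined_components(config))  # []

# A pipeline pointing at an exporter that was never configured is caught.
broken = {**config, "service": {"pipelines": {"traces": {"receivers": ["otlp"], "exporters": ["zipkin"]}}}}
print(find_undefined_components(broken))  # ["traces: exporter 'zipkin' is not defined"]
```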

To learn more about OpenTelemetry architecture and collectors, visit: https://opentelemetry.io/docs/concepts

Configuring the Collector for Prometheus

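OpenTelemetry provides first-class support for Prometheus. The Collector’s `prometheus` exporter exposes metrics on an HTTP endpoint (here `1.2.3.4:1234`) that a Prometheus server scrapes. To configure it, specify settings like the following in the Collector’s configuration file and reference the exporter in a metrics pipeline: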

```yaml
exporters:
  prometheus:
    endpoint: "1.2.3.4:1234"
    tls:
      ca_file: "/path/to/ca.pem"
      cert_file: "/path/to/cert.pem"
      key_file: "/path/to/key.pem"
    namespace: test-space
    const_labels:
      label1: value1
      "another label": spaced value
    send_timestamps: true
    metric_expiration: 180m
    enable_open_metrics: true
    resource_to_telemetry_conversion:
      enabled: true
```
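Prometheus metric names must match `[a-zA-Z_:][a-zA-Z0-9_:]*` and label names `[a-zA-Z_][a-zA-Z0-9_]*`, so values such as `test-space` or `another label` in the configuration above cannot appear verbatim. The sketch below shows one way a metric with a namespace and constant labels could be rendered as a line of the Prometheus text exposition format, assuming invalid characters are replaced with underscores. The helper names and the metric `http.requests` are hypothetical, and the output is illustrative, not a guarantee of what the `prometheus` exporter emits.

```python
import re


def sanitize(name: str) -> str:
    """Make a string a valid Prometheus metric or label name (conservatively)."""
    cleaned = re.sub(r"[^a-zA-Z0-9_]", "_", name)
    return "_" + cleaned if cleaned[:1].isdigit() else cleaned


def escape_value(value: str) -> str:
    """Escape backslashes, double quotes, and newlines in label values."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_sample(namespace: str, name: str, labels: dict, value: float) -> str:
    """Render one sample as `metric{label="value",...} value`."""
    metric = sanitize(f"{namespace}_{name}") if namespace else sanitize(name)
    pairs = ",".join(
        f'{sanitize(k)}="{escape_value(v)}"' for k, v in sorted(labels.items())
    )
    return f"{metric}{{{pairs}}} {value}" if pairs else f"{metric} {value}"


const_labels = {"label1": "value1", "another label": "spaced value"}
print(render_sample("test-space", "http.requests", const_labels, 42))
# test_space_http_requests{another_label="spaced value",label1="value1"} 42
```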

Configuring the Collector for Jaeger

OpenTelemetry has extensive support for Jaeger as well. Recent Jaeger versions accept OTLP natively, so the Collector can send traces to Jaeger with its standard `otlp` exporter (the dedicated `jaeger` exporter from older Collector releases has been deprecated and removed). A configuration along these lines, assuming Jaeger’s OTLP gRPC port is reachable at `localhost:4317` and an `otlp` receiver is defined as in the TelemetryHub example, would look like this:

```yaml
exporters:
  otlp/jaeger:
    endpoint: localhost:4317
    tls:
      insecure: true  # plaintext for a local Jaeger; enable TLS in production

service:
  pipelines:
    traces:
      receivers: [otlp]
      exporters: [otlp/jaeger]
```

Configuring the Collector for Zipkin

OpenTelemetry also offers strong support for Zipkin. To send trace data to Zipkin, add a `zipkin` exporter to the Collector’s configuration file:

```yaml
exporters:
  zipkin:
    endpoint: http://localhost:9411/api/v2/spans
    format: json
```
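Zipkin’s v2 API accepts a JSON array of spans whose timestamps and durations are expressed in microseconds. The sketch below builds such a payload with the standard library to show the shape of what reaches the `/api/v2/spans` endpoint; the IDs, service name, times, and the `zipkin_span` helper are placeholder assumptions, and in practice the Collector’s exporter produces this for you.

```python
import json
from typing import Optional


def zipkin_span(trace_id: str, span_id: str, name: str, service: str,
                start_s: float, end_s: float,
                parent_id: Optional[str] = None) -> dict:
    """Build one span in Zipkin's v2 JSON model; times are epoch microseconds."""
    span = {
        "traceId": trace_id,                                # 16 or 32 lowercase hex chars
        "id": span_id,                                      # 16 lowercase hex chars
        "name": name,
        "timestamp": round(start_s * 1_000_000),            # start, in microseconds
        "duration": round((end_s - start_s) * 1_000_000),   # length, in microseconds
        "localEndpoint": {"serviceName": service},
    }
    if parent_id is not None:
        span["parentId"] = parent_id
    return span


# Placeholder IDs and times, chosen for illustration.
span = zipkin_span(
    trace_id="4bf92f3577b34da6a3ce929d0e0e4736",
    span_id="00f067aa0ba902b7",
    name="get /cart",
    service="checkout",
    start_s=1_700_000_000.0,
    end_s=1_700_000_000.25,
)

# The request body is a JSON array of spans; it would be POSTed to
# http://localhost:9411/api/v2/spans with Content-Type: application/json.
payload = json.dumps([span])
print(span["duration"])  # 250000
```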

Monitoring Backends

OpenTelemetry provides first-class support for monitoring backends, including Prometheus, Jaeger, Zipkin, and TelemetryHub. This way, you can use the backend of your choice without having to worry about compatibility issues.

Supported backends include:

- TelemetryHub

- Prometheus

- Jaeger

- Zipkin

- Amazon Kinesis

- InfluxDB

- M3

- Google Cloud Operations (formerly Stackdriver)

OpenTelemetry: The Future of Observability

OpenTelemetry is a fantastic initiative that shows promise in consolidating a fragmented observability landscape. Although the project is still evolving, it has already generated a lot of interest and is being used by some of the most well-known companies in the industry.

OpenTelemetry should be on your radar if you are looking for a unified approach to monitoring and tracing, since it covers traces, metrics, and logs in a single framework.

If you want to know more about OpenTelemetry’s architecture and how it is implemented, check out TelemetryHub’s blog. We have extensive resources that can get you up to speed in no time!